AR Training for Metal Additive Manufacturing

AR Training

Augmented Reality, AI-powered, Text Recognition, Object Detection, Personalized Feedback

Objective

Simplify the training process, reduce the associated cost and time, and remain versatile enough to work with multiple metal 3D printers and AR headsets.

Overview

Create a system that standardizes the workflow in Metal Additive Manufacturing (AM) so that new operators can be trained efficiently.

Challenges

- Metal AM has developed rapidly over the last few decades and can produce parts with intricate geometries and detail that CNC machining and die casting cannot achieve. Major industries are investing in metal 3D printers but struggle to find trained, skilled operators. Training can take weeks and cost thousands of dollars. The shortage of skilled operators can also be attributed to educational systems lagging behind AM technology. A solution was therefore needed that reduces the time and money required to train new AM operators.

Solution

A system was created that standardizes the metal AM workflow in a comprehensive format so that new operators can be trained quickly and efficiently. This was accomplished by implementing an AI-powered AR training simulation for metal AM that:

- Utilized an AI system for text recognition and object detection

- Provided an innovative, immersive training environment through an AR headset

- Supported the delivery of just-in-time guidance

- Provided contextual and personalized feedback

- Worked with any AR headset and any metal 3D printer

An AI algorithm was developed to support this simulation.
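The text recognition feature listed above implies comparing recognized text against the text a training step expects to see. A minimal sketch of that comparison follows. It is an illustration under stated assumptions, not the project's algorithm: the function names, the 0.8 threshold, and the sample strings are hypothetical, and fuzzy matching with Python's standard `difflib` stands in for whatever comparison the real system uses.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so spacing noise is ignored."""
    return " ".join(text.lower().split())


def matches_expected(recognized: str, expected: str, threshold: float = 0.8) -> bool:
    """Return True when recognized text is close enough to the expected text.

    The threshold is a hypothetical value chosen for illustration.
    """
    ratio = SequenceMatcher(None, normalize(recognized), normalize(expected)).ratio()
    return ratio >= threshold


if __name__ == "__main__":
    # Hypothetical strings: a recognition result with one misread character.
    print(matches_expected("Door  L0CKED", "Door locked"))
    print(matches_expected("Ready", "Door locked"))
```

A tolerance of this kind lets a single misread character pass while still rejecting text that belongs to a different screen or label.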

Key Features

- Augmented Reality

- AI-powered

- Text Recognition

- Object Detection

- Personalized Feedback

Development

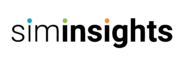

CAD software was used to create a digital twin of the Renishaw AM400: SolidWorks was used for the full configuration and Fusion 360 for the RBV configuration.

Precise measurements were taken of the parts that operators interact with most often, such as doors, buttons, and build areas. External equipment, such as the chiller, dryer, and argon tank, was modeled as well.

Implementation & Integration

The digital twin was uploaded into the HyperSkill virtual environment, and a step-by-step training procedure was created that could include pictures and videos to facilitate learning. Computer vision was used to detect which button the user pressed.
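One way to turn object detection output into a "which button was pressed" decision is sketched below. This is an assumption-laden illustration rather than the HyperSkill integration itself: the `Detection` type, the label names, and the 0.5 confidence threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Detection:
    label: str         # hypothetical class name, e.g. "button_a"
    confidence: float  # model score in [0, 1]


def pressed_button(detections: list[Detection], min_confidence: float = 0.5) -> Optional[str]:
    """Return the most confident label at or above the threshold, or None."""
    candidates = [d for d in detections if d.confidence >= min_confidence]
    if not candidates:
        return None
    return max(candidates, key=lambda d: d.confidence).label


def check_step(detections: list[Detection], expected_label: str) -> str:
    """Turn a detection result into feedback text for the current step."""
    label = pressed_button(detections)
    if label is None:
        return "No button detected; please try again."
    if label == expected_label:
        return "Correct button."
    return f"Detected {label}, expected {expected_label}."


if __name__ == "__main__":
    frame = [Detection("button_a", 0.91), Detection("button_b", 0.40)]
    print(check_step(frame, expected_label="button_a"))
```

Discarding low-confidence detections before choosing a label keeps a weak, spurious match from being reported as a wrong button press.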

For the AR visualization, a HoloLens 2 was used, and HyperSkill was installed on the headset so that the trainee could experience the simulation. The trainee first scaled the Renishaw AM400 digital twin and fixed it in place in the room using HoloLens 2 finger and hand gestures.

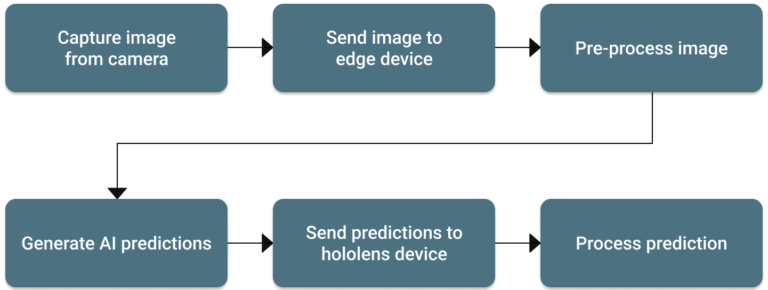

Process flow of the AI system

The computer vision AI system interfaced with the HyperSkill app to provide immediate user feedback. At a given prompt, the HoloLens camera captured an image, which was sent to the web app for AI processing. The prediction was then sent back to the HoloLens and presented to the user as feedback.
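The capture, send, predict, and return loop described above can be sketched as three small functions. This is only an outline of the message flow under assumed conventions: the JSON field names, the base64 encoding, the function names, and the stand-in model are hypothetical and do not describe the actual HyperSkill or web app interface.

```python
import base64
import json
from typing import Callable


def build_request(step_id: str, image_bytes: bytes) -> str:
    """Headset side: package the captured frame as a JSON payload."""
    return json.dumps({
        "step_id": step_id,
        "image_b64": base64.b64encode(image_bytes).decode("ascii"),
    })


def handle_request(payload: str, predict: Callable[[bytes], str]) -> str:
    """Web app side: decode the frame, run the model, return the prediction."""
    request = json.loads(payload)
    image_bytes = base64.b64decode(request["image_b64"])
    return json.dumps({
        "step_id": request["step_id"],
        "prediction": predict(image_bytes),
    })


def feedback(response: str, expected: str) -> str:
    """Headset side: convert the prediction into a message for the trainee."""
    result = json.loads(response)
    if result["prediction"] == expected:
        return "Step complete."
    return "Not quite; check the control and try again."


if __name__ == "__main__":
    # Stand-in model: a real system would run text recognition or
    # object detection on the image bytes here.
    def fake_predict(image: bytes) -> str:
        return "button_a"

    reply = handle_request(build_request("step-1", b"raw-frame-bytes"), fake_predict)
    print(feedback(reply, expected="button_a"))
```

Passing the model in as a callable keeps the transport logic testable without a headset or a trained network.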

Training Procedure

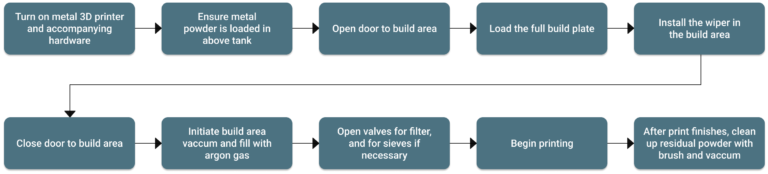

A high-level flowchart of the standardized process was developed for both the full and RBV configurations. An experienced operator reviewed it to ensure that all steps were correct and in order. A detailed, low-level flowchart was also developed for implementation in HyperSkill.
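A linear flowchart of this kind can be represented as linked steps and checked automatically before an operator reviews it. The sketch below is an assumption, not the project's data model: the `Step` type and the placeholder step names are hypothetical and do not reflect the real AM400 procedure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    step_id: str
    instruction: str
    next_id: Optional[str]  # None marks the final step


def ordered_steps(steps: dict[str, Step], start_id: str) -> list[str]:
    """Walk the flowchart from start_id and return step ids in order.

    Raises ValueError on a missing step, a loop, or an unreachable step.
    """
    order: list[str] = []
    seen: set[str] = set()
    current: Optional[str] = start_id
    while current is not None:
        if current not in steps:
            raise ValueError(f"unknown step: {current}")
        if current in seen:
            raise ValueError(f"loop at step: {current}")
        seen.add(current)
        order.append(current)
        current = steps[current].next_id
    unreachable = set(steps) - seen
    if unreachable:
        raise ValueError(f"unreachable steps: {sorted(unreachable)}")
    return order


if __name__ == "__main__":
    # Placeholder steps for illustration only.
    procedure = {
        "s1": Step("s1", "Placeholder instruction 1", "s2"),
        "s2": Step("s2", "Placeholder instruction 2", "s3"),
        "s3": Step("s3", "Placeholder instruction 3", None),
    }
    print(ordered_steps(procedure, "s1"))
```

A structural check like this catches broken links and loops, while the correctness of each instruction still depends on review by an experienced operator.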